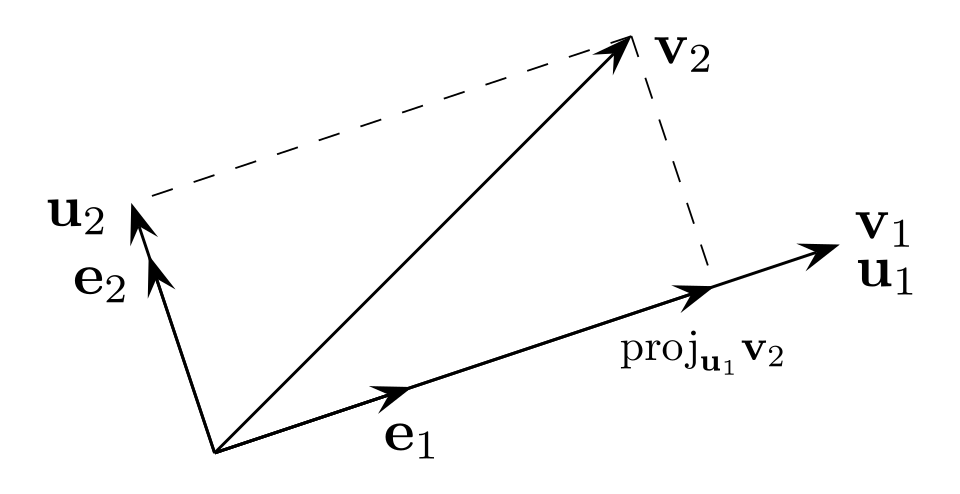

Gram-Schmidt is a procedure for constructing an orthonormal basis from a set of linearly independent vectors. At each step, subtract from the new vector its components along all previously accepted directions.

Algorithm

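Given linearly independent vectors $v_1, \dots, v_n$, produce an orthonormal set $e_1, \dots, e_n$:

$$u_k = v_k - \sum_{j=1}^{k-1} \langle v_k, e_j \rangle \, e_j$$

then normalize: $e_k = u_k / \lVert u_k \rVert$.

The projection step removes the component of $v_k$ in the $e_j$ direction, leaving only what’s orthogonal to everything already in the basis.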
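The steps above can be sketched in a few lines of plain Python. This is a minimal illustration of the classical variant, not a production routine; the helper names `dot` and `gram_schmidt` and the `1e-12` dependence threshold are assumptions made for the example.

```python
import math

def dot(a, b):
    """Euclidean inner product of two equal-length sequences."""
    return sum(x * y for x, y in zip(a, b))

def gram_schmidt(vectors):
    """Classical Gram-Schmidt: return an orthonormal list spanning the inputs."""
    basis = []
    for v in vectors:
        # Subtract the projection of v onto every direction accepted so far.
        u = list(v)
        for e in basis:
            coeff = dot(v, e)
            u = [ui - coeff * ei for ui, ei in zip(u, e)]
        norm = math.sqrt(dot(u, u))
        if norm < 1e-12:  # assumed tolerance for "linearly dependent"
            raise ValueError("input vectors are numerically linearly dependent")
        # Normalize the residual and accept it as the next basis direction.
        basis.append([ui / norm for ui in u])
    return basis

# Example: e1 is approximately (0.6, 0.8) and e2 approximately (0.8, -0.6).
e1, e2 = gram_schmidt([[3.0, 4.0], [1.0, 0.0]])
```

Note that the projection coefficient is computed from the original `v`, which is what makes this the classical form rather than the modified one.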

QR Decomposition

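Think of the input vectors as columns of a matrix $A$. Gram-Schmidt produces exactly $A = QR$:

- $Q$ has the orthonormal vectors $e_k$ as columns

- $R$ is upper triangular: the entry $R_{jk}$ with $j < k$ is $\langle e_j, v_k \rangle$ (the projection coefficient from step $k$ onto direction $j$), and the diagonal is $R_{kk} = \lVert u_k \rVert$, the length of the residual before normalization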

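So QR decomposition and Gram-Schmidt are the same computation, just described from different angles. Numerically, Modified Gram-Schmidt or Householder reflections are preferred for stability, because classical Gram-Schmidt loses orthogonality to rounding error when the input vectors are nearly dependent.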
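To make the correspondence concrete, here is a rough sketch of Modified Gram-Schmidt that records the coefficients as it goes and then checks that the original columns are recovered. The function name `mgs_qr`, the list-of-columns layout, and the test matrix are illustrative assumptions.

```python
import math

def mgs_qr(columns):
    """Modified Gram-Schmidt on a list of column vectors.

    Returns (q_cols, r) with orthonormal q_cols and upper-triangular r,
    such that columns[k] == sum over j of r[j][k] * q_cols[j].
    """
    n = len(columns)
    work = [list(c) for c in columns]  # residuals, updated as we go
    q_cols = []
    r = [[0.0] * n for _ in range(n)]
    for j in range(n):
        # Diagonal entry: length of the current residual.
        r[j][j] = math.sqrt(sum(x * x for x in work[j]))
        q = [x / r[j][j] for x in work[j]]
        q_cols.append(q)
        # Remove the new direction from every later residual right away.
        for k in range(j + 1, n):
            r[j][k] = sum(a * b for a, b in zip(q, work[k]))
            work[k] = [w - r[j][k] * qi for w, qi in zip(work[k], q)]
    return q_cols, r

cols = [[1.0, 1.0, 0.0], [1.0, 0.0, 1.0], [0.0, 1.0, 1.0]]
q_cols, r = mgs_qr(cols)

# Rebuild each column of A from Q and R to confirm A = QR.
rebuilt = [
    [sum(r[j][k] * q_cols[j][i] for j in range(3)) for i in range(3)]
    for k in range(3)
]
```

The only difference from the classical form is that each coefficient is taken against the partially reduced residual `work[k]` rather than the original column, which is what limits the build-up of rounding error.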

Why It Preserves Volume

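The key property used in Lyapunov exponent computation: orthogonalizing the vectors $v_k$ into residuals $u_k$ preserves the volume of the parallelepiped they span, because each step only subtracts multiples of earlier vectors (a shear). That volume is therefore the product $\prod_k \lVert u_k \rVert$. The lengths $\lVert u_k \rVert$ before normalization are what get logged to accumulate the Lyapunov exponents. No information is thrown away; it’s just repackaged into a rectangular box with mutually orthogonal edges.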
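As a quick sanity check of the volume claim, the sketch below compares the product of the residual lengths with the absolute determinant of the same three vectors, which gives the parallelepiped volume in three dimensions. The helper names `residual_lengths` and `det3` and the sample vectors are assumptions chosen for the example.

```python
import math

def residual_lengths(vectors):
    """Lengths of the Gram-Schmidt residuals, taken before normalization."""
    basis, lengths = [], []
    for v in vectors:
        u = list(v)
        for e in basis:
            c = sum(a * b for a, b in zip(u, e))
            u = [ui - c * ei for ui, ei in zip(u, e)]
        norm = math.sqrt(sum(x * x for x in u))
        lengths.append(norm)
        basis.append([x / norm for x in u])
    return lengths

def det3(a, b, c):
    """Determinant of the 3x3 matrix with rows a, b, c."""
    return (a[0] * (b[1] * c[2] - b[2] * c[1])
            - a[1] * (b[0] * c[2] - b[2] * c[0])
            + a[2] * (b[0] * c[1] - b[1] * c[0]))

vectors = [[1.0, 1.0, 0.0], [1.0, 0.0, 1.0], [0.0, 1.0, 1.0]]
volume_from_lengths = math.prod(residual_lengths(vectors))
volume_from_det = abs(det3(*vectors))
# Both values come out at 2, up to rounding.
```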

In Lyapunov Exponent Computation

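See Computing Lyapunov Exponents. At each iterate $t$, we have vectors $w_1^{(t)}, \dots, w_n^{(t)}$ that are no longer orthogonal. Gram-Schmidt gives:

$$\left[\, w_1^{(t)} \;\cdots\; w_n^{(t)} \,\right] = Q^{(t)} R^{(t)}$$

The new orthonormal basis $Q^{(t)}$ is used as input for the next step, and $\ln R^{(t)}_{kk}$ feeds into the running average for $\lambda_k$.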

Iterative re-orthogonalization prevents the numerical collapse that would otherwise occur as the most-expanding direction comes to dominate all vectors.
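The loop described in this section might look roughly like the following two-dimensional sketch. It assumes a constant Jacobian (a linear map), which is a simplification: in a real system the Jacobian would be re-evaluated along the trajectory at every step. The matrix `[[2, 1], [1, 1]]`, the step count, and the function names are illustrative choices, not taken from the note.

```python
import math

def qr_2x2(cols):
    """Gram-Schmidt on two 2D vectors; returns (q_cols, (r11, r22))."""
    (a, b), (c, d) = cols
    r11 = math.hypot(a, b)
    q1 = (a / r11, b / r11)
    r12 = q1[0] * c + q1[1] * d
    u2 = (c - r12 * q1[0], d - r12 * q1[1])
    r22 = math.hypot(u2[0], u2[1])
    q2 = (u2[0] / r22, u2[1] / r22)
    return [q1, q2], (r11, r22)

def lyapunov_exponents(jacobian, steps=200):
    """Estimate both exponents of the constant linear map x -> J x."""
    (j11, j12), (j21, j22) = jacobian
    q = [(1.0, 0.0), (0.0, 1.0)]
    log_sums = [0.0, 0.0]
    for _ in range(steps):
        # Push each basis vector forward by the Jacobian.
        pushed = [(j11 * x + j12 * y, j21 * x + j22 * y) for x, y in q]
        # Re-orthonormalize and log the stretch factors on the diagonal of R.
        q, diag = qr_2x2(pushed)
        log_sums = [s + math.log(rkk) for s, rkk in zip(log_sums, diag)]
    # Running average of the logged stretch factors, per step.
    return [s / steps for s in log_sums]

lam1, lam2 = lyapunov_exponents([[2.0, 1.0], [1.0, 1.0]])
```

For this matrix the determinant is 1, so the two estimates should sum to roughly zero, and the larger one should approach the logarithm of the leading eigenvalue, $\ln\big((3 + \sqrt{5})/2\big)$, as the number of steps grows.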