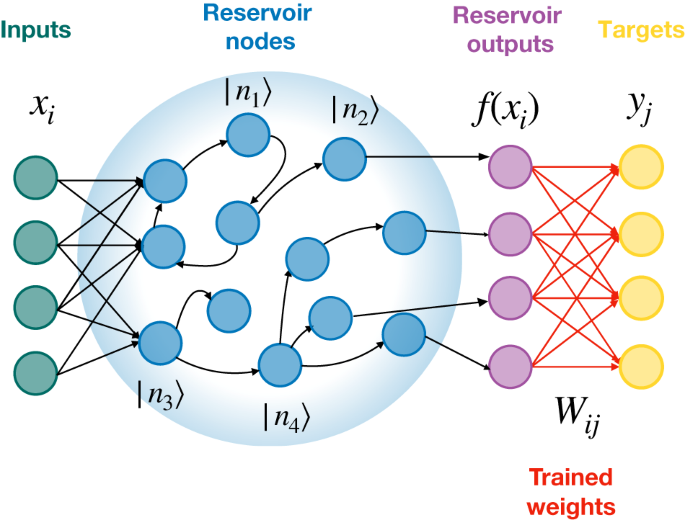

An efficient machine learning framework, based on recurrent neural networks, that processes time-series data by mapping the input into a high-dimensional space: a “reservoir” of randomly connected neurons whose connection weights stay fixed.
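One common way to write this down is the echo state network formulation. This is an assumption, since the notes do not name a specific model. The input $\mathbf{u}(t)$ drives the reservoir state $\mathbf{x}(t) \in \mathbb{R}^N$ through fixed random matrices $W_{\text{in}}$ and $W$, and the output $\mathbf{y}(t)$ is read off by a matrix $W_{\text{out}}$:

$$
\mathbf{x}(t) = \tanh\!\big(W_{\text{in}}\,\mathbf{u}(t) + W\,\mathbf{x}(t-1)\big), \qquad \mathbf{y}(t) = W_{\text{out}}\,\mathbf{x}(t)
$$

$W$ is typically rescaled so that its spectral radius sits somewhat below 1. This heuristic makes the effect of old inputs on the state fade over time. Only $W_{\text{out}}$ is learned.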

- The only training that happens here is the training of the “readout layer”; the reservoir’s random weights are never updated.

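- The readout is just a set of weights running from each reservoir neuron directly to the output layer, so fitting it is a linear problem (commonly solved with linear or ridge regression).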

- Excels at pattern recognition, chaotic signal prediction, and speech recognition, at lower computational cost than training a full recurrent network, because only the readout is trained.

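- Turns out to be deeply connected to Fourier’s insight that a signal can be built up from simple building blocks (sinusoids). The reservoir supplies a rich set of signals, and the readout only learns how to combine them. It’s all a matter of looking at the signals the right way.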
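The points above can be made concrete with a minimal echo state network sketch in Python/NumPy. The function names (`make_reservoir`, `run_reservoir`, `train_readout`) are hypothetical. The hyperparameters (100 neurons, spectral radius 0.9, ridge penalty, washout length) and the sine-wave prediction task are also illustrative assumptions, not taken from the notes. The reservoir is random and fixed. The only fitted part is the linear readout, solved here in closed form with ridge regression.

```python
import numpy as np


def make_reservoir(n_inputs, n_reservoir, spectral_radius=0.9, seed=0):
    """Create fixed random input and recurrent weights (never trained)."""
    rng = np.random.default_rng(seed)
    w_in = rng.uniform(-0.5, 0.5, size=(n_reservoir, n_inputs))
    w = rng.uniform(-0.5, 0.5, size=(n_reservoir, n_reservoir))
    # Rescale so the largest |eigenvalue| of w equals spectral_radius.
    w *= spectral_radius / np.max(np.abs(np.linalg.eigvals(w)))
    return w_in, w


def run_reservoir(w_in, w, inputs):
    """Drive the reservoir with inputs of shape (T, n_inputs); return states (T, N)."""
    x = np.zeros(w.shape[0])
    states = np.empty((len(inputs), w.shape[0]))
    for t, u in enumerate(inputs):
        # x(t) = tanh(W_in u(t) + W x(t-1))
        x = np.tanh(w_in @ u + w @ x)
        states[t] = x
    return states


def train_readout(states, targets, ridge=1e-6):
    """Fit W_out by ridge regression, the only trained part of the model."""
    n = states.shape[1]
    a = states.T @ states + ridge * np.eye(n)
    b = states.T @ targets
    return np.linalg.solve(a, b).T  # shape (n_outputs, N)


# Usage: one-step-ahead prediction of a sine wave (illustrative task).
t = 0.1 * np.arange(600)
signal = np.sin(t)
inputs = signal[:-1, None]   # u(t)
targets = signal[1:, None]   # value at the next time step

w_in, w = make_reservoir(n_inputs=1, n_reservoir=100)
states = run_reservoir(w_in, w, inputs)

washout = 100  # discard early states that still depend on the zero initial state
w_out = train_readout(states[washout:], targets[washout:])
pred = states[washout:] @ w_out.T
mse = np.mean((pred - targets[washout:]) ** 2)
print(f"training MSE: {mse:.2e}")
```

This design mirrors the Fourier bullet. The reservoir provides a fixed family of signals, and training only chooses the weights used to combine them, much as Fourier coefficients weight fixed sinusoids. Because the readout is linear, training reduces to a single linear solve rather than backpropagation through time.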