The classic introduction to how neural networks can represent boolean functions.

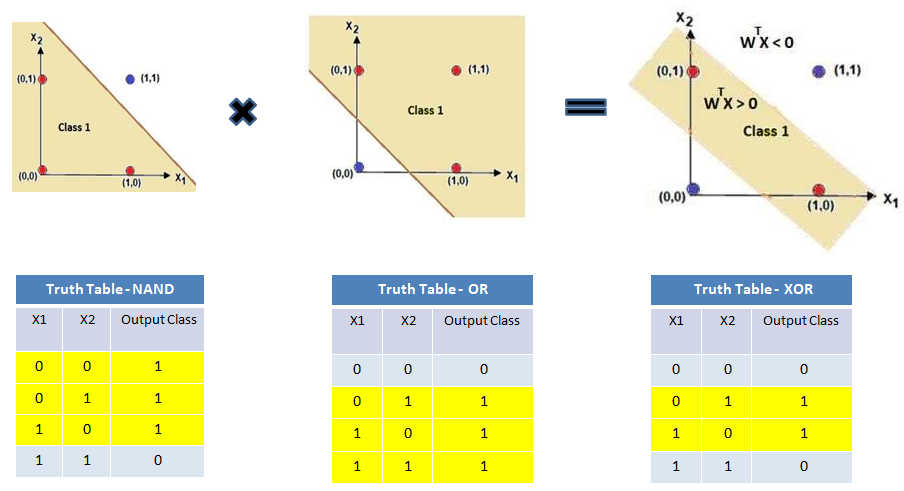

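a perceptron computes $y = \mathbb{1}\left[\sum_{i=1}^{n} w_i x_i \ge \theta\right]$ for inputs $x \in \{0,1\}^n$, weights $w \in \mathbb{R}^n$, and threshold $\theta \in \mathbb{R}$. geometrically, the decision boundary $\sum_i w_i x_i = \theta$ is a hyperplane cutting through the boolean hypercube $\{0,1\}^n$, so a single perceptron can represent exactly those boolean functions whose true inputs can be separated from their false inputs by a hyperplane (the linearly separable functions).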
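The definition fits in a few lines of Python. This is a minimal sketch assuming the non-strict firing rule $\sum_i w_i x_i \ge \theta$ (some texts use a strict $>$); `perceptron` and `positive_vertices` are hypothetical helper names. Enumerating every vertex of the hypercube and keeping those on the firing side of the hyperplane makes the geometric picture concrete, here using 3-input majority ($w = (1,1,1)$, $\theta = 2$) as an example.

```python
from itertools import product


def perceptron(x, w, theta):
    """Fire (return 1) iff the weighted sum w.x reaches the threshold theta."""
    return int(sum(wi * xi for wi, xi in zip(w, x)) >= theta)


def positive_vertices(w, theta):
    """Vertices of the hypercube {0,1}^n on the firing side of w.x = theta."""
    n = len(w)
    return [x for x in product((0, 1), repeat=n) if perceptron(x, w, theta)]


# 3-input majority: fires when at least two inputs are 1
print(positive_vertices((1, 1, 1), 2))
# [(0, 1, 1), (1, 0, 1), (1, 1, 0), (1, 1, 1)]
```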

linearly separable gates (single perceptron):

| gate | weights | threshold |
|---|---|---|
| AND | $w_1 = w_2 = 1$ | $\theta = 2$ |
| OR | $w_1 = w_2 = 1$ | $\theta = 1$ |
| NOT | $w_1 = -1$ | $\theta = 0$ |
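A quick exhaustive check of one valid parameter choice per gate, assuming the $\ge \theta$ firing rule: AND with $w_1 = w_2 = 1$, $\theta = 2$; OR with $w_1 = w_2 = 1$, $\theta = 1$; NOT with $w_1 = -1$, $\theta = 0$. Other weights work too; `GATES` and `REFERENCE` are hypothetical names used only for illustration.

```python
from itertools import product


def perceptron(x, w, theta):
    # Fires (1) iff the weighted sum reaches the threshold.
    return int(sum(wi * xi for wi, xi in zip(w, x)) >= theta)


# (weights, threshold) for each gate; one valid choice among many
GATES = {
    "AND": ((1, 1), 2),
    "OR": ((1, 1), 1),
    "NOT": ((-1,), 0),
}

# Reference truth functions to check the perceptrons against
REFERENCE = {
    "AND": lambda x: int(x[0] and x[1]),
    "OR": lambda x: int(x[0] or x[1]),
    "NOT": lambda x: int(not x[0]),
}

# Check every input of every gate
for name, (w, theta) in GATES.items():
    for x in product((0, 1), repeat=len(w)):
        assert perceptron(x, w, theta) == REFERENCE[name](x), (name, x)
print("all gates match")
```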

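the standard counterexample is XOR (among the 16 two-input boolean functions, only XOR and XNOR are not linearly separable): its positive inputs $(0,1)$ and $(1,0)$ can't be linearly separated from its negative inputs $(0,0)$ and $(1,1)$, so no single perceptron works. (firing on $(0,1)$ and $(1,0)$ but not on $(0,0)$ requires $w_2 \ge \theta$, $w_1 \ge \theta$ and $\theta > 0$, hence $w_1 + w_2 \ge 2\theta > \theta$, so $(1,1)$ would fire too.) you need a hidden layer, e.g. $\mathrm{XOR}(x_1, x_2) = \mathrm{AND}(\mathrm{OR}(x_1, x_2), \mathrm{NAND}(x_1, x_2))$.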
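A sketch of one such two-layer network, assuming the same $\ge \theta$ firing rule. The decomposition into OR and NAND hidden units followed by an AND output is one standard choice, not the only one; `xor_net` and `single_perceptron_computes_xor` are hypothetical names. The grid search at the end is only a numerical sanity check of the impossibility argument over a small set of integer weights and half-integer thresholds, not a proof.

```python
from itertools import product


def perceptron(x, w, theta):
    # Fires (1) iff the weighted sum reaches the threshold.
    return int(sum(wi * xi for wi, xi in zip(w, x)) >= theta)


def xor_net(x1, x2):
    # Hidden layer: OR and NAND of the raw inputs.
    h_or = perceptron((x1, x2), (1, 1), 1)
    h_nand = perceptron((x1, x2), (-1, -1), -1)
    # Output layer: AND of the two hidden units.
    return perceptron((h_or, h_nand), (1, 1), 2)


def single_perceptron_computes_xor(w1, w2, theta):
    # True iff this one perceptron agrees with XOR on all four inputs.
    return all(
        perceptron((a, b), (w1, w2), theta) == (a ^ b)
        for a, b in product((0, 1), repeat=2)
    )


print([xor_net(a, b) for a, b in product((0, 1), repeat=2)])  # [0, 1, 1, 0]

# Sanity check (not a proof): no parameters on this grid compute XOR.
grid = range(-3, 4)
thetas = [t / 2 for t in range(-8, 9)]
assert not any(
    single_perceptron_computes_xor(w1, w2, th)
    for w1 in grid
    for w2 in grid
    for th in thetas
)
```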

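in general, any boolean function on $n$ bits can be expressed as a depth-2 network in disjunctive normal form (DNF): one hidden neuron per minterm (weight $+1$ on each input that is 1 in the minterm, $-1$ on each input that is 0, threshold equal to the number of 1s, so it fires on exactly that input), with an OR at the output. this is an exact representation result for the discrete case, though the number of hidden neurons can be exponential in $n$ (one per true input, so up to $2^n$).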
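A sketch of the DNF construction, assuming the $\ge \theta$ firing rule. Each hidden neuron gets weight $+1$ on inputs that are 1 in its minterm, $-1$ on inputs that are 0, and threshold equal to the number of 1s, so it fires on exactly that input; the output neuron is an OR. `dnf_network` is a hypothetical helper that enumerates all $2^n$ inputs, so it is only practical for small $n$. 4-bit parity is used as an example because it has $2^{n-1} = 8$ true inputs, hence 8 hidden neurons in this construction.

```python
from itertools import product


def perceptron(x, w, theta):
    # Fires (1) iff the weighted sum reaches the threshold.
    return int(sum(wi * xi for wi, xi in zip(w, x)) >= theta)


def dnf_network(f, n):
    """Build a depth-2 threshold network computing f: {0,1}^n -> {0,1}.

    Returns the network as a callable plus its number of hidden neurons.
    """
    # One hidden neuron per true input (minterm) m.
    minterms = [m for m in product((0, 1), repeat=n) if f(m)]
    hidden = [(tuple(1 if mi else -1 for mi in m), sum(m)) for m in minterms]

    def net(x):
        h = [perceptron(x, w, theta) for w, theta in hidden]
        # Output neuron is an OR: fires if any hidden unit fires.
        # With no minterms the sum is 0 < 1, so f == 0 is handled too.
        return perceptron(h, (1,) * len(h), 1)

    return net, len(hidden)


parity = lambda x: sum(x) % 2
net, size = dnf_network(parity, 4)
assert all(net(x) == parity(x) for x in product((0, 1), repeat=4))
print(size)  # 8
```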