- Can be implemented in Brian2, probably the easiest route at this point. It seems to be the most stable spiking neural network simulator and has a CUDA backend (Brian2CUDA).

- Artem Kirsanov seems to like them a lot. He highlighted the “hardware lottery”: spiking neural nets didn't take off because they don't fit our standard von Neumann architecture and aren't parallelizable in the way GPUs allow.

https://en.wikipedia.org/wiki/Spiking_neural_network

- Bio-inspired and energy-efficient: they process information as sparse, discrete spikes in continuous time.

- They would be ideal if the hardware let them scale; even so, they already excel at low-power edge AI and temporal pattern recognition.
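A minimal sketch of the "sparse, discrete spikes in continuous time" idea, assuming a leaky integrate-and-fire (LIF) neuron (a common SNN neuron model, chosen here for illustration) integrated with forward Euler. The function name `simulate_lif` and all parameter values (`tau_m`, `v_thresh`, etc.) are hypothetical, not taken from Brian2 or any other library. The membrane potential evolves continuously; the output is only a short list of spike times.

```python
def simulate_lif(input_current, dt=1e-3, tau_m=20e-3, v_rest=0.0,
                 v_thresh=1.0, v_reset=0.0, r_m=1.0):
    """Simulate one leaky integrate-and-fire neuron (hypothetical helper).

    Dynamics: tau_m * dv/dt = -(v - v_rest) + r_m * I(t)
    Returns (spike_times in seconds, membrane voltage trace).
    """
    v = v_rest
    spike_times = []
    trace = []
    for step, i_t in enumerate(input_current):
        # Forward-Euler update: leak toward v_rest plus input drive
        v += dt / tau_m * (-(v - v_rest) + r_m * i_t)
        if v >= v_thresh:
            # Threshold crossing: record a discrete spike event, then reset
            spike_times.append(step * dt)
            v = v_reset
        trace.append(v)
    return spike_times, trace
```

With a constant input of 1.5 for 200 steps (200 ms at `dt = 1e-3`), the neuron fires 9 times, so the output is 9 events instead of 200 dense values. With an input of 0.5 the potential settles at 0.5, below threshold, and it never fires.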

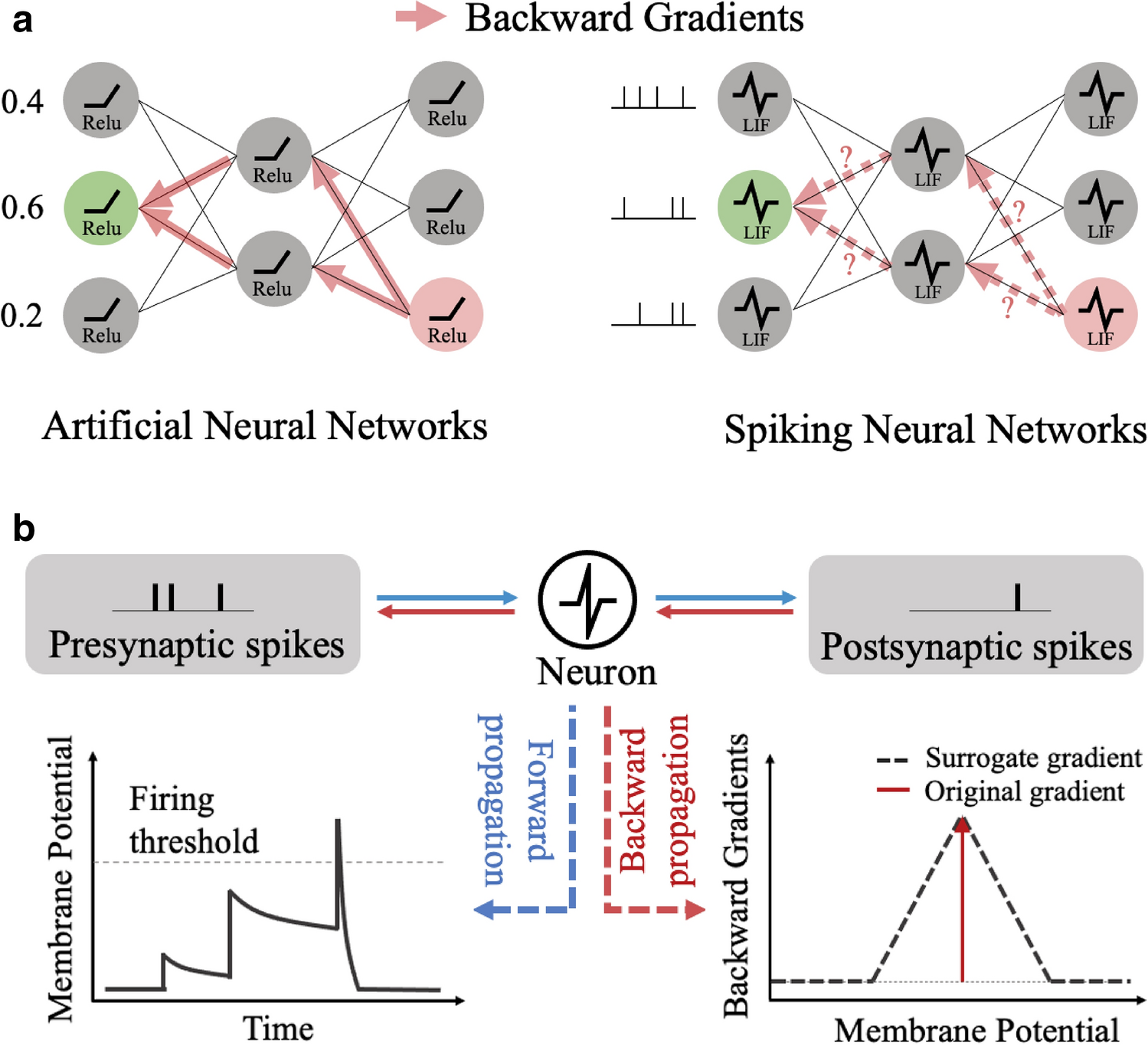

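Much harder to train: spiking is non-differentiable (a spike is a step function of the membrane potential, whose derivative is zero almost everywhere), so standard gradient descent via backpropagation is not directly viable. Workarounds such as surrogate gradients exist.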
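A small pure-Python illustration of the problem, assuming the spike is modelled as a Heaviside step of `v - threshold` and a single weight `w` drives the potential as `v = w * x`. The true derivative of the step is zero away from the threshold, so the chain rule passes no gradient to `w`. The surrogate-gradient trick, brought in here for illustration, keeps the hard step in the forward pass but uses the derivative of a smooth "fast sigmoid" `x / (1 + slope*|x|)` in the backward pass. All function names and values (`slope=5.0`, `lr=0.5`) are hypothetical choices, not a specific library's API.

```python
def spike(v, threshold=1.0):
    """Forward pass: Heaviside step, 1.0 if the neuron fires, else 0.0."""
    return 1.0 if v >= threshold else 0.0


def spike_true_grad(v, threshold=1.0, eps=1e-6):
    """Central finite difference of the step: 0.0 unless v is within eps of threshold."""
    return (spike(v + eps, threshold) - spike(v - eps, threshold)) / (2 * eps)


def spike_surrogate_grad(v, threshold=1.0, slope=5.0):
    """Backward-pass substitute: derivative of the fast sigmoid x / (1 + slope*|x|),
    which is 1 / (1 + slope*|x|)**2 with x = v - threshold."""
    x = v - threshold
    return 1.0 / (1.0 + slope * abs(x)) ** 2


def train_single_weight(x=1.0, target=1.0, w=0.5, lr=0.5, steps=20,
                        use_surrogate=True):
    """Gradient descent on L = (spike(w * x) - target)**2 for one weight."""
    for _ in range(steps):
        v = w * x
        s = spike(v)
        dloss_ds = 2.0 * (s - target)
        ds_dv = spike_surrogate_grad(v) if use_surrogate else spike_true_grad(v)
        w -= lr * dloss_ds * ds_dv * x  # chain rule: dv/dw = x
    return w
```

With the true derivative, `train_single_weight(use_surrogate=False)` returns the initial `w = 0.5` unchanged and the neuron never learns to fire. With the surrogate, `w` climbs past the threshold within a few steps, after which `spike(w * x)` is 1.0 and the loss is zero.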